It’s become quite clear to those of us in the SNIA Ethernet Storage Forum (ESF) that everyone loves a great debate. We’ve proved that with our “Great Storage Debates” webcast series, which has had over 3,500 views in just a few months! Last month we had another friendly debate on FCoE vs. iSCSI vs. iSER. If you missed the live event, you can watch it now on-demand and download a PDF of the webcast slides. Our live audience asked a lot of interesting questions. As promised, here are answers to them all.

Q. How often are iSCSI offload adapters used in customer environments as compared to software initiators? Can these adapters be used for all IP traffic or do they only run iSCSI?

A. iSCSI offload adapters are well suited to high-performance storage access at data rates of up to 100Gbps for business-critical applications, such as latency-sensitive transactional applications and large-file business intelligence applications. iSCSI offload adapters typically also support offload of other storage protocols, such as NVMe-oF, iSER, and FCoE, and they carry regular Ethernet traffic in either offloaded or non-offloaded mode.

Q. What you’ve missed with iSCSI is Jumbo Frames. That payload size is one of the biggest advantages over Fibre Channel. The biggest problem with both FCoE and iSCSI is they build the networks too complex, with too many hops, without true redundant isolation. Best Practices with block based FC is to keep the host and storage as close to each other as possible. And to have separate isolated redundant networks/fabric.

A. The Jumbo Frame (JF) argument is quite contentious among iSCSI storage and network administrators, even beyond anything to do with Fibre Channel.

The performance advantages of JFs are minimal: only a 3%-5% boost over the default MTU size of 1500 bytes. In mixed-workload environments (which dominate data center application deployments), JFs simply do not provide the kind of benefits that people expect in real-world scenarios. The only time JFs can “move the needle,” so to speak, is in massively scaled systems with hundreds or thousands of devices, but this raises other issues.
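As a rough sanity check on that 3%-5% figure, the sketch below compares the fraction of on-wire bytes that carry TCP payload at an MTU of 1500 versus 9000. The overhead constants are assumptions for illustration (IPv4 and TCP without options, no VLAN tag, iSCSI PDU headers ignored), and the function names are hypothetical rather than anything from the webcast.

```python
# Per-frame Ethernet overhead on the wire, in bytes (assumed: no VLAN tag).
ETH_OVERHEAD = 8 + 14 + 4 + 12   # preamble+SFD, header, FCS, inter-frame gap
IP_TCP_HEADERS = 20 + 20         # IPv4 + TCP headers, no options (assumption)


def wire_efficiency(mtu: int) -> float:
    """Fraction of on-wire bytes that carry TCP payload for a full-size frame."""
    payload = mtu - IP_TCP_HEADERS
    on_wire = mtu + ETH_OVERHEAD
    return payload / on_wire


def jumbo_gain(default_mtu: int = 1500, jumbo_mtu: int = 9000) -> float:
    """Relative throughput gain from protocol overhead alone."""
    return wire_efficiency(jumbo_mtu) / wire_efficiency(default_mtu) - 1.0


if __name__ == "__main__":
    for mtu in (1500, 9000):
        print(f"MTU {mtu}: {wire_efficiency(mtu):.2%} payload efficiency")
    print(f"Relative gain from jumbo frames: {jumbo_gain():.1%}")
```

Under these assumptions the gain from header overhead alone comes out at a little over 4%, which is consistent with the range quoted above. It does not model CPU or interrupt savings, which vary by adapter and workload.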

One of those issues is that every device in the system needs to have JFs enabled. This can be something of a problem when systems get as large as they need to be in order to take advantage of JFs. Ensuring that every device is configured properly – especially over time, and especially when considering how iSCSI devices are added to networked environments – is a job that requires the coordination of the server/virtualization teams, the networking teams, and the storage teams. By and large, many people find QoS to be a more productive means of performance improvement for iSCSI systems than JFs.
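To illustrate why a single misconfigured device matters, here is a minimal sketch that audits a path for jumbo frame readiness. The device names and MTU values are a made-up inventory, and `effective_mtu` and `devices_below` are hypothetical helpers, not part of any vendor tool.

```python
from typing import Dict, List


def effective_mtu(path: Dict[str, int]) -> int:
    """The usable MTU along a path is limited by its smallest hop."""
    return min(path.values())


def devices_below(path: Dict[str, int], required_mtu: int = 9000) -> List[str]:
    """Names of devices whose configured MTU is below the required value."""
    return [name for name, mtu in path.items() if mtu < required_mtu]


# Hypothetical inventory: one top-of-rack switch was left at the default MTU.
example_path = {
    "host-nic": 9000,
    "vswitch": 9000,
    "tor-switch": 1500,
    "array-port": 9000,
}

if __name__ == "__main__":
    print("Effective MTU:", effective_mtu(example_path))
    print("Needs attention:", devices_below(example_path))
```

In this example one device at the default setting drags the whole path down to 1500, which is the coordination problem described above.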

Fibre Channel, on the other hand, has a maximum frame payload (data field) of 2112 bytes. FCoE, then, only requires “baby jumbo” frames (~2.5k), for which the configuration is pushed from the switch to the end devices. What FC has that iSCSI does not have is the concept of “sequences” and “exchanges,” which ensure that a long flow of frames (regardless of their size) is sent as a single entity. So, regardless of what the frame size is (2.5k or 9k), the data flow is sent with consistency and low jitter because of the way that the sequences and exchanges are handled.
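The “baby jumbo” requirement can be checked with some simple arithmetic. The sketch below adds up the encapsulation fields around a full-size Fibre Channel frame. The field sizes are assumptions taken from the commonly documented FCoE encapsulation layout, with the 2112-byte figure treated as the maximum FC data field, and the function names are hypothetical.

```python
# Field sizes in bytes (assumed from the commonly documented FCoE layout).
ETH_HEADER = 6 + 6 + 4 + 2   # dst MAC, src MAC, 802.1Q tag, EtherType
FCOE_HEADER = 14             # version, reserved bits, start-of-frame
FC_HEADER = 24               # Fibre Channel frame header
FC_MAX_PAYLOAD = 2112        # maximum FC data field, per the text above
FC_CRC = 4                   # Fibre Channel CRC
FCOE_TRAILER = 4             # end-of-frame plus reserved bytes
ETH_FCS = 4                  # Ethernet frame check sequence


def fcoe_required_mtu() -> int:
    """Ethernet payload size needed to carry one full-size FC frame."""
    return FCOE_HEADER + FC_HEADER + FC_MAX_PAYLOAD + FC_CRC + FCOE_TRAILER


def fcoe_max_frame() -> int:
    """Total Ethernet frame size, including header and FCS."""
    return ETH_HEADER + fcoe_required_mtu() + ETH_FCS


if __name__ == "__main__":
    print("Required MTU:", fcoe_required_mtu())
    print("Maximum FCoE frame:", fcoe_max_frame())
```

Under these assumptions the required MTU is 2158 bytes and the full frame is 2180 bytes, both comfortably inside a ~2.5k baby jumbo setting and far short of a 9k jumbo frame.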

The concern about “too complex” and “too many hops” is an interesting one, as Fibre Channel (and, correspondingly, FCoE) are deliberately kept as simple and straightforward as possible. A FC network, for instance, rarely goes beyond 2 hops (“hops” in FC are measured as the links between switches, whereas in Ethernet “hops” are measured as the switches themselves).

Logically, then, there is usually, at most, an edge-core-edge topology with a deterministic path, thanks to Fibre Channel’s FSPF routing algorithm.
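The two hop-counting conventions described above are easy to mix up, so here is a small sketch that applies both to the same edge-core-edge path. The switch names and helper functions are hypothetical and serve only to illustrate the difference.

```python
from typing import Sequence


def fc_hops(switch_path: Sequence[str]) -> int:
    """Fibre Channel convention: count the links between switches."""
    return max(len(switch_path) - 1, 0)


def ethernet_hops(switch_path: Sequence[str]) -> int:
    """Ethernet convention as described above: count the switches themselves."""
    return len(switch_path)


# Hypothetical edge-core-edge path between a host and its storage.
edge_core_edge = ["edge-a", "core", "edge-b"]

if __name__ == "__main__":
    print("FC hops:", fc_hops(edge_core_edge))
    print("Ethernet hops:", ethernet_hops(edge_core_edge))
```

The same three-switch path counts as 2 hops in Fibre Channel terms and 3 in Ethernet terms, so hop counts should only be compared when both use the same convention.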

iSCSI topologies, on the other hand, can be complex (as Ethernet topologies sometimes can be). For larger iSCSI environments, it is often recommended to isolate the storage traffic out into its own, simplified topology. iSCSI SANs that have grown organically, however, can sometimes struggle to be reined in over time.

The best practice for all storage, not just block, is to keep it as close to the host/source as is reasonably possible. In backup scenarios, for example, you want the storage far enough away to be safe from any catastrophe, but close enough to meet recovery objectives. Keeping storage close to the host is a common design principle, and, as mentioned in the webinar, it is important that architectural principles ensure high availability (HA). HA offsets the rigidity that block storage systems need in order to compensate for weaker upper-layer protocol (ULP) recovery mechanisms.

Q. Most servers today have enough compute power to not need offload adapters.

A. This statement might be true in some situations, but definitely not most. With more and more virtual machines being deployed on physical systems, and with new storage technologies such as SSDs and NVMe devices that greatly lower latencies, servers are often CPU-bound when moving or retrieving data from storage. Offloading storage-related activities to an adapter frees the CPU and increases overall server performance.

Q. In which industry is each protocol (i.e., FCoE, iSCSI, and iSER) widely used, and where?

A. iSCSI is the most widely supported Ethernet SAN protocol. It has native initiator support integrated into all the major operating systems and hypervisors, built-in RDMA for high-performance offloaded implementations at up to 100Gbps, and support across major storage platforms. It is thus well suited to deployment across cloud and enterprise data center environments.

Q. Do iSCSI offload adapters provide the IPSec encryption, or is this done in software only solutions? Please answer from both initiator and target perspective.

A. Yes, iSCSI protocol offload adapters can optionally offload IPSec encryption for iSCSI (as well as NVMe-oF) in both initiator and target operation, at data rates of up to 100Gbps. This results in higher overall server and target efficiency, including power, cooling, memory, and CPU savings.

Q. Does iSER support a direct connection, or is a switch between them required?

A. A switch is not required.

Q. J, you left out the centralized management that Fibre Channel provides for FCoE as a positive.

A. I got there eventually! But you are correct: the Fibre Channel tools for a centralized management plane with the name server, regardless of the number of switches in the fabric, are a tremendous positive for FCoE/FC solutions at scale.

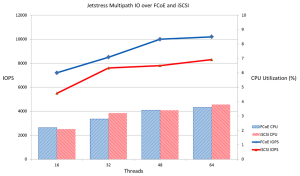

Q. Is multipath possible on the initiator with iSER, and will it scale with high IOPS?

A. Yes. Multipath is possible on the initiator with iSER, and it scales with high IOPS.

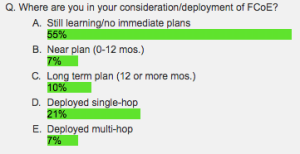

Q. FCoE has been around for a while, but I noticed that some storage vendors are dropping support for it. Do you still see a big future for FCoE?

A. As a protocol, FCoE has always been able to be used wherever and whenever needed. Almost all converged infrastructure systems use FCoE, for instance. Given that the key advantage of FCoE has been traffic/protocol consolidation, there is an extremely strong use case for FCoE at “the first hop” – that is, from the server to the first network switch.

Q. What is the MTU for iSER?

A. iSER is a protocol that sits above the Layer 2 Data Link Layer, which is where the MTU is set. As a result, iSER will accept/accommodate any MTU setting that is configured at that layer. Please see the answer earlier about Jumbo Frames for more information.

Ready for more great storage debates? Our next one will be RoCE vs. iWARP on August 22, 2018. Save your place by registering here.

And you can check out our previous debates “File vs. Block vs. Object Storage” and “Fibre Channel vs. iSCSI” on-demand at your convenience too. Happy debating!