The SNIA Networking Storage Forum (NSF) recently hosted a live webcast, “What Software Defined Storage Means for Storage Networking,” where our experts, Ted Vojnovich and Fred Bower, explained what makes software defined storage (SDS) different from traditional storage. If you missed the live event, you can watch it on-demand at your convenience. We received several questions during the live event, and here are our experts’ answers to all of them:

Q. Are there cases where SDS can still work with legacy storage, so that high-priority flows such as online transaction processing (OLTP) can use the SAN for legacy storage while lower-priority and backup data flows use the SDS infrastructure?

A. The simple answer is yes. Like anything else, companies use different methods and architectures to meet their compute and storage requirements, just as public cloud may be used for non-sensitive or non-vital data while in-house cloud or traditional storage is used for sensitive data. Of course, this adds cost, so the benefits need to be weighed against the additional expense.

Q. What is the best way to mitigate unpredictable network latency that can exceed the bounds of a storage service level agreement (SLA)?

A. There are several ways to mitigate latency. Generally speaking, increased bandwidth contributes to better network speed because the “pipe” is essentially larger and more data can travel through it. There are other ways to reduce latency as well, such as the use of offloads and accelerators. Remote Direct Memory Access (RDMA) is one of these and is being used by many storage companies to help handle the increased capacity and bandwidth needed in Flash storage environments. Edge computing should also be added to this list, as it relocates key data processing and access points from the center of the network to the edge, where data can be gathered and delivered more efficiently.
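As a rough illustration of the bandwidth point, the time to move one I/O across a link can be approximated as a fixed latency plus the payload size divided by the bandwidth. The sketch below is a simplified model, not a measurement tool: the function name `transfer_time_ms` and the example numbers (a 1 MB payload, 0.1 ms of fixed latency) are assumptions chosen for illustration, and the model ignores congestion, queuing, and protocol overhead.

```python
def transfer_time_ms(payload_bytes: int, bandwidth_gbps: float, fixed_latency_ms: float) -> float:
    """Approximate one-way transfer time: fixed latency plus serialization time.

    Simplified model (assumption): no congestion, queuing, or protocol overhead.
    """
    payload_bits = payload_bytes * 8
    serialization_ms = payload_bits / (bandwidth_gbps * 1e9) * 1000
    return fixed_latency_ms + serialization_ms


# Hypothetical example: a 1 MB payload with 0.1 ms of fixed latency.
for gbps in (10, 25, 100):
    t = transfer_time_ms(1_000_000, gbps, 0.1)
    print(f"{gbps:>3} Gb/s link: {t:.3f} ms")
```

In this model a larger pipe shrinks only the serialization term. The fixed latency term is unchanged, which is where offloads, RDMA, and moving processing closer to the edge come in.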

Q. Can you please elaborate on SDS scaling in comparison with traditional storage?

A. Most SDS solutions are designed to scale out both performance and capacity to avoid bottlenecks, whereas most traditional storage has had limited scalability, scaling up in capacity only. As a scale-up storage system begins to reach capacity, the controller becomes saturated and performance suffers. The workaround for this problem with traditional storage is to upgrade the storage controller or purchase more arrays, which can often lead to unproductive and hard-to-manage silos.
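The difference can be sketched with a toy model. In the scale-up case, adding drive shelves adds capacity, but aggregate throughput is capped by the controller. In the scale-out case, each added node brings its own processing along with its capacity. The function names and all numbers below are hypothetical, and the scale-out function assumes ideal linear scaling, which real systems only approximate.

```python
def scale_up_throughput_gbps(shelves: int, per_shelf_gbps: float, controller_limit_gbps: float) -> float:
    """Scale-up: shelves add capacity, but the controller caps total throughput."""
    return min(shelves * per_shelf_gbps, controller_limit_gbps)


def scale_out_throughput_gbps(nodes: int, per_node_gbps: float) -> float:
    """Scale-out: each node adds capacity and performance (idealized linear scaling)."""
    return nodes * per_node_gbps


# Hypothetical numbers: 2 GB/s per shelf or node, 10 GB/s controller limit.
for n in (2, 4, 8):
    up = scale_up_throughput_gbps(n, 2.0, 10.0)
    out = scale_out_throughput_gbps(n, 2.0)
    print(f"{n} units: scale-up {up} GB/s, scale-out {out} GB/s")
```

With these assumed figures, the scale-up system stops gaining throughput once the controller limit is reached, while the scale-out system keeps growing with each node.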

Q. You didn’t talk much about distributed storage management and namespaces (e.g., NFS or AFS). Can you comment?

A. Storage management consists of monitoring and maintaining storage health, platform health, and drive health. It also includes storage provisioning, such as creating each LUN, share, etc., or binding LUNs to controllers and servers. On top of that, storage management involves storage services like disk groups, snapshots, deduplication, replication, etc. This is true for both SDS and traditional storage (Converged Infrastructure and Hyper-Converged Infrastructure will leverage this ability in storage). NFS is predominantly a non-Windows (Linux, Unix, VMware) file storage protocol, while AFS is no longer popular in the data center and has been replaced as a file storage protocol by either NFS or SMB (in fact, it’s been a long time since somebody mentioned “AFS”).

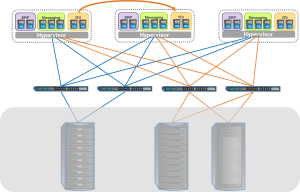

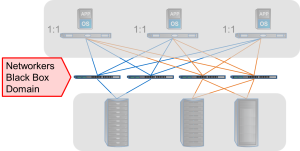

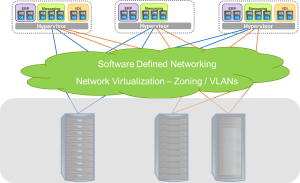

Q. How does SDS affect storage networking? Are SAN vendors going to lose customers?

A. SAN vendors aren’t going anywhere, because the large existing installed base isn’t going quietly into the night. Most SDS solutions focus on Ethernet connectivity (as the diagrams show), while traditional storage is split between Fibre Channel and Ethernet; InfiniBand is more of a niche storage play for HPC and some AI or machine learning customers.

Q. Storage costs for SDS are highly dependent on scale and on whether replication or erasure coding is used. An erasure-coded multi-petabyte solution can cost significantly less than a traditional storage solution.

A. It’s a tradeoff between processing complexity and the cost of additional space. Erasure coding is processing-intensive but requires less storage capacity. Making copies uses less processing power but consumes more capacity. It is also true that replicating copies uses more network bandwidth. Erasure coding tends to be used more often for storage of large objects or files, and less often for latency-sensitive block storage.
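To make the capacity side of this tradeoff concrete, here is a minimal sketch comparing the raw capacity needed for the same usable capacity under replication and under erasure coding. The function names, the three-copy replication factor, and the 8+3 erasure coding layout are assumptions picked for illustration, not figures from the webcast. The sketch covers capacity only and does not model the processing cost.

```python
def replication_raw_tb(usable_tb: float, copies: int) -> float:
    """Raw capacity needed when every piece of data is stored `copies` times."""
    return usable_tb * copies


def erasure_coded_raw_tb(usable_tb: float, data_fragments: int, parity_fragments: int) -> float:
    """Raw capacity needed for a k+m erasure code (k data, m parity fragments)."""
    return usable_tb * (data_fragments + parity_fragments) / data_fragments


# Hypothetical example: 1,000 TB (1 PB) of usable capacity.
usable = 1000
print("3-way replication:", replication_raw_tb(usable, 3), "TB raw")
print("8+3 erasure coding:", erasure_coded_raw_tb(usable, 8, 3), "TB raw")
```

Under these assumptions, three-way replication needs 3,000 TB of raw capacity while the 8+3 layout needs 1,375 TB, which is why the capacity savings grow in absolute terms at multi-petabyte scale.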

If you have more questions on SDS, let us know in the comment box.