Our recent SNIA Ethernet Storage Forum Webcast on How Ethernet RDMA Protocols iWARP and RoCE Support NVMe over Fabrics generated a lot of great questions. We didn’t have time to get to all of them during the live event, so as promised, here are the answers. If you have additional questions, please comment on this blog and we’ll get back to you as soon as we can.

Q. Are there still actual (memory based) submission and completion queues, or are they just facades in front of the capsule transport?

A. On the host side, they’re “facades” as you call them. When running NVMe/F, host reads and writes do not actually use NVMe submission and completion queues. The data simply moves to and from the RNIC’s RDMA queues. On the target side, there could be real NVMe submission and completion queues in play. But the more accurate answer is that it is “implementation dependent.”

Q. Who places the command from NVMe queue to host RDMA queue from software standpoint?

A. This is managed by the kernel host software in code written to the NVMe/F specification. The idea is that any existing application that thinks it is writing to the existing NVMe host software will in fact cause the SQE entry to be encapsulated and placed in an RDMA send queue.
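
As a rough illustration of that encapsulation step, here is a minimal Python sketch. It is not the kernel driver: the names `CommandCapsule`, `HostFabricDriver`, and `rdma_send_queue` are hypothetical, and a plain in-memory queue stands in for the RNIC's send queue. The only detail taken from NVMe itself is that a submission queue entry (SQE) is 64 bytes.

```python
from collections import deque
from dataclasses import dataclass

SQE_SIZE = 64  # an NVMe submission queue entry is 64 bytes


@dataclass
class CommandCapsule:
    """Illustrative container: one SQE plus optional in-capsule data."""
    sqe: bytes
    data: bytes = b""


class HostFabricDriver:
    """Hypothetical stand-in for the kernel NVMe/F host software."""

    def __init__(self):
        # Stand-in for the RNIC's RDMA send queue (an assumption for this sketch).
        self.rdma_send_queue = deque()

    def submit(self, sqe: bytes, data: bytes = b"") -> CommandCapsule:
        # The application believes it is submitting an ordinary NVMe command.
        if len(sqe) != SQE_SIZE:
            raise ValueError(f"SQE must be {SQE_SIZE} bytes, got {len(sqe)}")
        capsule = CommandCapsule(sqe=sqe, data=data)
        # Instead of ringing a local doorbell, queue the capsule for RDMA send.
        self.rdma_send_queue.append(capsule)
        return capsule
```

The application-facing call (`submit`) looks the same as a local submission; only the destination of the entry changes.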

Q. You say “most enterprise switches” support NVMe/F over RDMA, I guess those are ‘new’ ones, so what is the exact question to ask a vendor about support in an older switch?

A. For iWARP, any switch that can handle Internet traffic will do. Mellanox and Intel have different answers for RoCE / RoCEv2. Mellanox says that for RoCE, it is recommended, but not required, that the switch support Priority Flow Control (PFC). Most new enterprise switches support PFC, but you should check with your switch vendor to be sure. Intel believes RoCE was architected around DCB. The name itself, RoCE, stands for “RDMA over Converged Ethernet,” i.e., Ethernet with DCB. Intel believes RoCE in general will require PFC (or some future standard that delivers equivalent capabilities) for efficient RDMA over Ethernet.

Q. Can you comment on when one should use RoCEv2 vs. iWARP?

A. We gave a high-level overview of some of the deployment considerations on slide 30. We refer you to some of the vendor links on slide 32 for “non-vendor neutral” perspectives.

Q. If you take RDMA out of equation, what is the key advantage of NVMe/F over other protocols? Is it that they are transparent to any application?

A. NVMe/F allows the application to bypass the SCSI stack and uses native NVMe commands across a network. Most other block storage protocols require using the SCSI protocol layer, translating the NVMe commands into SCSI commands. With NVMe/F you also gain parallelism, simplicity of the command set, a separation between administrative sessions and data sessions, and a reduction of latency and processing required for NVMe I/O operations.

Q. Is ROCE v1 compatible with ROCE v2?

A. Yes. Adapters speaking RoCEv2 can also maintain RDMA connections with adapters speaking RoCEv1 because RoCEv2 ports are backwards interoperable with RoCEv1. Most of the currently shipping NICs supporting RoCE support both RoCEv1 and RoCEv2.

Q. Are RoCE and iWARP the only way to use Ethernet as a fabric for NVMe/F?

A. Initially yes; only iWARP and RoCE are supported for NVMe over Ethernet. But the NVM Express Working Group is also targeting FCoE. We should have probably been clearer about that, though it is noted on slide 11.

Q. What about doing NVMe over Fibre Channel? Is anyone looking at, or doing this?

A. Yes. This is not in scope for the first spec release, but the NVMe WG is collaborating with the FCIA on this. So NVMe over Fibre Channel is expected as another standard in the near future, to be promoted by T11.

Q. Do RoCE and iWARP both use just IP addresses for management or is there a higher level addressing mechanism, and management?

A. RoCEv2 uses the RoCE Connection Manager, and iWARP uses TCP connection management. They both use IP for addressing.

Q. Are there other fabrics to run NVMe over fabrics? Can you do this over OmniPath or Infiniband?

A. InfiniBand is in scope for the first spec release. Also, there is a related effort by the FCIA to support NVMe over Fibre Channel in a standard that will be promoted by T11.

Q. You indicated NVMe stack is in kernel while RDMA is a user level verb. How are NVMe SQ/CQ entries transferred from NVMe to RDMA and vice versa? Also, could smaller transfers in NVMe (e.g. SGL of 512B) be combined to larger sizes before being sent to RDMA entries and vice versa?

A. NVMe/F supports multiple scatter-gather entries, so a single command can combine multiple noncontiguous transfers. However, the protocol doesn’t support chaining multiple NVMe commands in the same command capsule; a command capsule contains only a single NVMe command. Please also refer to slide 18 from the presentation.
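
To make the distinction concrete, here is a small Python sketch of the rule "many scatter-gather entries per command, one command per capsule." The `SglEntry` and `Capsule` types and the `build_capsules` helper are hypothetical and do not reflect the wire format; the opcodes 0x01 (Write) and 0x02 (Read) are the standard NVM command set values.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class SglEntry:
    """One noncontiguous memory region (illustrative, not the wire layout)."""
    address: int
    length: int


@dataclass
class Capsule:
    """Exactly one NVMe command, with as many SGL entries as it needs."""
    opcode: int
    sgl: List[SglEntry]

    def transfer_length(self) -> int:
        return sum(entry.length for entry in self.sgl)


def build_capsules(commands: List[Tuple[int, List[Tuple[int, int]]]]) -> List[Capsule]:
    """Build one capsule per command; commands never share a capsule."""
    return [
        Capsule(opcode, [SglEntry(addr, length) for addr, length in regions])
        for opcode, regions in commands
    ]


capsules = build_capsules([
    (0x01, [(0x1000, 512), (0x9000, 512)]),  # one write, two 512 B regions
    (0x02, [(0x2000, 4096)]),                # one read, one region
])
```

Here the two 512 B regions are gathered into a single 1 KiB write, but the write and the read still travel in two separate capsules.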

Q. 1) How do implementers and adopters today test NVMe deployments? 2) Besides latency, what other key performance indicators do implementers and adopters look for to determine whether the NVMe deployment is performing well or not?

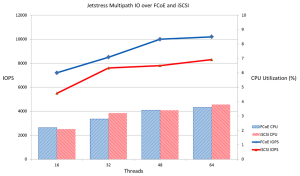

A. 1) Like any other datacenter specification, testing is done by debugging, interop testing and plugfests. Local NVMe is well supported and can be tested by anyone. NVMe/F can be tested using pre-standard drivers or solutions from various vendors. UNH-IOL is an organization with an excellent reputation for helping here. 2) Latency, yes. But also sustained bandwidth, IOPS, and CPU utilization, i.e., the “usual suspects.”

Q. If RoCE CM supports ECN, why can’t it be used to implement a full solution without requiring PFC?

A. Explicit Congestion Notification (ECN) is an extension to TCP/IP defined by the IETF. First point is that it is a standard for congestion notification, not congestion management. Second point is that it operates at L3/L4. It does nothing to help make the L2 subnet “lossless.” Intel and Mellanox agree that generally speaking, all RDMA protocols perform better in a “lossless,” engineered fabric utilizing PFC (or some future standard that delivers equivalent capabilities). Mellanox believes PFC is recommended but not strictly required for RoCE, so RoCE can be deployed with PFC, ECN, or both. In contrast, Intel believes that for RoCE / RoCEv2 to deliver the “lossless” performance users expect from an RDMA fabric, PFC is in general required.

Q. How involved are Ethernet RDMA efforts with the SDN/OCP community? Is there a coming example of RoCE or iWARP on an SDN switch?

A. Good question, but neither RoCEv2 nor iWARP look any different to switch hardware than any other Ethernet packets. So they’d both work with any SDN switch. On the other hand, it should be possible to use SDN to provide special treatment with respect to say congestion management for RDMA packets. Regarding the Open Compute Project (OCP), there are various Ethernet NICs and switches available in OCP form factors.

Q. Is there a RoCE v3?

A. No. There is no RoCEv3.

Q. iWARP and RoCE both fall back to TCP/IP in the lowest communication sense? So they are somewhat compatible?

A. They can speak sockets to each other. In that sense they are compatible. However, for the usage model we’re considering here, NVMe/F, RDMA is required. Because of L3/L4 differences, RoCE and iWARP RNICs cannot speak RDMA to each other.
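
The L3/L4 difference can be seen in how the traffic is identified on the wire. The Python sketch below is illustrative only, and the `classify` function is hypothetical. It relies on three well-known values: RoCEv1 uses EtherType 0x8915 directly over Ethernet, RoCEv2 uses UDP destination port 4791, and iWARP runs over TCP. iWARP has no single fixed port, so TCP traffic can only be labeled "possibly iWARP" here.

```python
ETHERTYPE_IPV4 = 0x0800
ETHERTYPE_IPV6 = 0x86DD
ETHERTYPE_ROCE_V1 = 0x8915  # RoCEv1 sits directly on Ethernet, no IP header
IPPROTO_TCP = 6
IPPROTO_UDP = 17
ROCE_V2_UDP_PORT = 4791     # UDP destination port used by RoCEv2


def classify(ethertype, ip_proto=None, dst_port=None):
    """Label a frame by the headers an RNIC or switch would see."""
    if ethertype == ETHERTYPE_ROCE_V1:
        return "RoCEv1"
    if ethertype in (ETHERTYPE_IPV4, ETHERTYPE_IPV6):
        if ip_proto == IPPROTO_UDP and dst_port == ROCE_V2_UDP_PORT:
            return "RoCEv2"
        if ip_proto == IPPROTO_TCP:
            # iWARP is carried in ordinary TCP segments; the port alone
            # does not identify it, so this is only a hint.
            return "TCP (possibly iWARP)"
    return "other"
```

Because one protocol family rides on UDP (or raw Ethernet) and the other on TCP, an RNIC built for one cannot terminate an RDMA connection from the other.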

Q. So in case of RDMA (ROCE or iWARP), the NVMe controller’s fabric port is Ethernet?

A. Correct. But it must be RDMA-enabled Ethernet.

Q. What if I am using soft RoCE, do I still need an RNIC?

A. Functionally, soft RoCE or soft iWARP should work on a regular NIC. Whether the performance is sufficient to keep up with NVMe SSDs without the hardware offloads is a different matter.

Q. How would the NVMe controller know that a command is placed in the submission queue by the Fabric host driver? Is the fabric host driver responsible for notifying the NVMe controller through remote doorbell trigger or the Fabric target driver should trigger the doorbell?

A. No separate notification by the host is required. The fabric’s host driver simply sends a command capsule to notify its companion subsystem driver that there is a new command to be processed. The way that the subsystem side notifies the backend NVMe drive is out of the scope of the protocol.

Q. I am chair of the ETSI NFV working group on NFV acceleration. We are working on virtual RDMA and how VMs can benefit from hardware-independent RDMA. One cornerstone of this is a virtual-RDMA pseudo device. But there is not yet consensus on the minimal set of verbs to be supported. Do you think this minimal verb set can be identified? Lastly, the transport address space is not consistent between IB and Ethernet. How can transport-independent RDMA be supported?

A. You know, the NVM Express Working Group is working on exactly these questions. They have to define a “minimal verb set” since NVMe/F generates the verbs. Similarly, I’d suggest looking to the spec to see how they resolve the transport address space differences.

Q. What’s the plan for Linux submission of NVMe over Fabric changes? What releases are being targeted?

A. The Linux Driver WG in the NVMe WG expects to submit code upstream within a quarter of the spec being finalized. At this time it looks like the most likely Linux target will be kernel 4.6, but it could end up being kernel 4.7.

Q. Are NVMe SQ/CQ transferred transparently to RDMA Queues or can they be modified?

A. The method defined in the NVMe/F specification entails a transparent transfer. If you wanted to modify an SQE or CQE, do so before initiating an NVMe/F operation.

Q. How common are rNICs for recent servers? i.e. What’s a quick check I can perform to find out if my NIC is an rNIC?

A. rNICs are offered by nearly all major server vendors. The best way to check is to ask your server or NIC vendor if your NIC supports iWARP or RoCE.
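
On a Linux host, one informal check is to see whether any RDMA devices have been registered with the kernel; these appear under `/sys/class/infiniband` (RoCE and iWARP devices are listed there too, despite the name). The sketch below assumes the RDMA drivers are loaded, and the helper name `list_rdma_devices` is hypothetical. Note that a software RDMA device such as soft RoCE would also show up, so a non-empty list does not by itself prove hardware offload.

```python
import os


def list_rdma_devices(sysfs_dir="/sys/class/infiniband"):
    """Return the names of RDMA devices registered with the kernel.

    An empty list means no RDMA-capable device is currently registered
    (or the relevant driver is not loaded).
    """
    if not os.path.isdir(sysfs_dir):
        return []
    return sorted(os.listdir(sysfs_dir))


if __name__ == "__main__":
    devices = list_rdma_devices()
    if devices:
        print("RDMA devices found:", ", ".join(devices))
    else:
        print("No RDMA devices registered; check with your NIC vendor.")
```

Asking the vendor remains the authoritative answer; this only reports what the running kernel currently sees.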

Q. This is most likely out of the scope of this talk but could you perhaps share about 30K level on the differences between “NVMe controller” hardware versus “NVMeF” hardware. It’s most likely a combination of R-NIC+NVMe controller, but would be great to get your take on this.

A. A goal of the NVMe/F spec is that it work with all existing NVMe controllers and all existing RoCE and iWARP RNICs. So on even a very low level, we can say “no difference.” That said, of course, nothing stops someone from combining NVMe controller and rNIC hardware into one solution.

Q. Are there any example Linux targets in the distros that exercise RDMA verbs? An iWARP or iSER target in a distro?

A. iSER allows this using a LIO or TGT SCSI target.

Q. Is there a standard or IP for RDMA NIC?

A. The various RNICs are based on IBTA, IETF, and IEEE standards, which are shown on slide 26.

Q. What is the typical additional latency introduced comparing NVMe over Fabric vs. local NVMe?

A. In the 2014 IDF demo, the prototype NVMe/F stack matched the bandwidth of local NVMe with a latency penalty of only 8 µs over a local iWARP connection. Other demonstrations have shown an added fabric latency of 3 µs to 15 µs. The goal for the final spec is under 10 µs.

Q. How well is NVMe over RDMA supported for Windows?

A. It is not currently supported, but then the spec isn’t even finished. Contact Microsoft if you are interested in their plans.

Q. RDMA over Ethernet would not support Layer 2 switching? How do you deal with TCP overhead?

A. L2 switching is supported by both iWARP and RoCE. Both flavors of RNICs have MAC addresses, etc. iWARP has to deal with TCP/IP in hardware, using a TCP/IP Offload Engine (TOE). The TOE used in an iWARP RNIC is significantly constrained compared to a general purpose TOE and therefore can operate with very high performance. See the Chelsio website for proof points. RoCE does not use TCP so does not need to deal with TCP overhead.

Q. Does RDMA not work with fibre channel?

A. They are totally different Transports (L4) and Networks (L3). That said, the FCIA is working with NVMe, Inc. on supporting NVMe over Fibre Channel in a standard to be promoted by T11.

Update: If you missed the live event, it’s now available on-demand. You can also download the webcast slides.